niftynet.network.dense_vnet module

DenseVNetDesc: alias of DenseVNetParts

-

class

DenseVNet(num_classes, hyperparameters={}, architecture_parameters={}, w_initializer=None, w_regularizer=None, b_initializer=None, b_regularizer=None, acti_func='relu', name='DenseVNet')[source]¶ Bases:

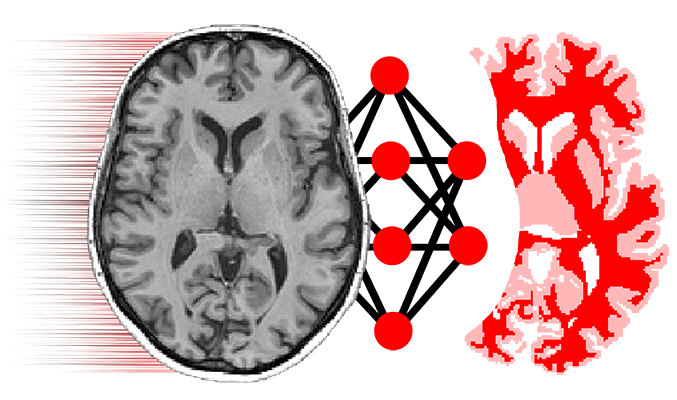

niftynet.network.base_net.BaseNet- implementation of Dense-V-Net:

- Gibson et al. Automatic multi-organ segmentation on abdominal CT with dense V-networks

### Diagram

DFS = Dense Feature Stack Block

- The input image is first downsampled to a given size.

- Each DFS+SD block (a dense feature stack followed by skip and downsampling) outputs a skip link and a downsampled output.

- All skip outputs are upscaled to the initial downsampled size.

- If an initial prior is given, it is added to the output prediction.

Overall layout:

    Input
      |
      --[ DFS ]-----------------------[ Conv ]------------[ Conv ]------[+]-->
           |                                       |  |                  |
           -----[ DFS ]---------------[ Conv ]------  |                  |
                   |                                  |                  |
                   -----[ DFS ]-------[ Conv ]---------                  |
                                                            [ Prior ]-----
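In the diagram, the skip output of each level has passed through one more downsampling step than the level above it, so it must be upscaled by a matching factor before the outputs are combined. The pure-Python sketch below does only that size bookkeeping. The stride of 2 per level, the three levels, the 96-voxel example size and the name `skip_upscale_plan` are illustrative assumptions, not values or functions taken from NiftyNet.

```python
from typing import List, Tuple

Size = Tuple[int, ...]


def skip_upscale_plan(downsampled_size: Size, n_levels: int = 3,
                      stride: int = 2) -> List[Tuple[Size, int]]:
    """Return (skip_size, upscale_factor) for each DFS+SD level.

    Hypothetical sketch: assumes every level shrinks each spatial dimension
    with a strided convolution, rounding up as 'same' padding does.
    """
    plan = []
    size = tuple(downsampled_size)
    for level in range(n_levels):
        # Skip output of this level, plus the factor that brings it back
        # to the initial downsampled size.
        plan.append((size, stride ** level))
        # The strided convolution feeds a smaller volume to the next DFS.
        size = tuple(-(-s // stride) for s in size)  # ceiling division
    return plan


if __name__ == "__main__":
    for skip_size, factor in skip_upscale_plan((96, 96, 96)):
        upscaled = tuple(s * factor for s in skip_size)
        print(skip_size, "x", factor, "->", upscaled)
```

When a size is not divisible by the stride, the upscaled volume overshoots the initial size and would need cropping: under these assumptions 9 becomes 5 and then 3, and 3 x 4 = 12.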

The DenseFeatureStackBlockWithSkipAndDownsample layer implements [DFS + Conv + Downsampling] in a single module and outputs two elements:

- Skip layer: [ DFS + Conv]

- Downsampled output: [ DFS + Down]

DenseFSBlockDesc: alias of DenseFSDesc

class DenseFeatureStackBlock(n_dense_channels, kernel_size, dilation_rates, use_bdo, name='dense_feature_stack_block', **kwargs)

Bases: niftynet.layer.base_layer.TrainableLayer

Dense Feature Stack Block

- The stack is initialized with the input from the preceding layers.

- The output of each convolution layer is iteratively added to the stack.

- Each successive convolution is applied to all previously stacked channels.

Diagram example:

    stack = [Input]
    stack = [stack, conv(stack)]
    stack = [stack, conv(stack)]
    stack = [stack, conv(stack)]
    …
    Output = [stack, conv(stack)]
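The stacking rule `stack = [stack, conv(stack)]` can be illustrated with a small pure-Python sketch on 1D signals instead of 3D volumes. It assumes one convolution per entry of `dilation_rates`, each producing `n_dense_channels` new channels; random weights and a ReLU stand in for trained weights and NiftyNet's normalization and activation, and `use_bdo` is not modelled. The function names are hypothetical.

```python
import random
from typing import List

Signal = List[float]


def dilated_conv1d(stack: List[Signal], n_out: int, kernel_size: int,
                   dilation: int, rng: random.Random) -> List[Signal]:
    """Zero-padded, length-preserving 1D dilated convolution over *all*
    channels in `stack`, producing `n_out` new channels (random weights,
    ReLU activation)."""
    length = len(stack[0])
    half = (kernel_size // 2) * dilation
    new_channels = []
    for _ in range(n_out):
        weights = [[rng.uniform(-1.0, 1.0) for _ in range(kernel_size)]
                   for _ in stack]
        channel = []
        for i in range(length):
            acc = 0.0
            for w, signal in zip(weights, stack):
                for k in range(kernel_size):
                    j = i - half + k * dilation  # taps spaced by the dilation
                    if 0 <= j < length:
                        acc += w[k] * signal[j]
            channel.append(max(acc, 0.0))
        new_channels.append(channel)
    return new_channels


def dense_feature_stack(inputs: List[Signal], n_dense_channels: int,
                        kernel_size: int, dilation_rates: List[int],
                        seed: int = 0) -> List[Signal]:
    rng = random.Random(seed)
    stack = list(inputs)  # stack = [Input]
    for rate in dilation_rates:
        # stack = [stack, conv(stack)]: each conv sees every earlier channel
        stack = stack + dilated_conv1d(stack, n_dense_channels,
                                       kernel_size, rate, rng)
    return stack


if __name__ == "__main__":
    x = [[float(i % 3) for i in range(8)]]  # one input channel of length 8
    out = dense_feature_stack(x, n_dense_channels=4, kernel_size=3,
                              dilation_rates=[1, 2, 4])
    print(len(out), "channels")  # 1 + 3 * 4 = 13
```

Under these assumptions the channel count grows linearly: c input channels and k dilation rates give c + k * n_dense_channels output channels, so each later convolution reads more channels than the one before it.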

DenseSDBlockDesc: alias of DenseSDBlock

class DenseFeatureStackBlockWithSkipAndDownsample(n_dense_channels, kernel_size, dilation_rates, n_seg_channels, n_downsample_channels, use_bdo, name='dense_feature_stack_block', **kwargs)

Bases: niftynet.layer.base_layer.TrainableLayer

Dense Feature Stack with Skip Layer and Downsampling

Downsampling is done through strided convolution:

    ---[ DenseFeatureStackBlock ]----------[ Conv ]------- Skip layer
                                 |
                                 ----------------------- Downsampled Output

See DenseFeatureStackBlock for more info.
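The two outputs of this block can be sketched in pure Python on 1D signals: a stride-1 convolution produces `n_seg_channels` skip channels at full resolution, and a stride-2 convolution produces `n_downsample_channels` channels at roughly half the length. The stride of 2, the kernel size of 3, the random weights, the omission of normalization and activation, and the names `conv1d` and `skip_and_downsample` are assumptions for illustration only.

```python
import random
from typing import List, Tuple

Signal = List[float]


def conv1d(stack: List[Signal], n_out: int, kernel_size: int, stride: int,
           rng: random.Random) -> List[Signal]:
    """Zero-padded 1D convolution over all channels of `stack`.

    Output length is ceil(len / stride); output position o is centred on
    input position o * stride."""
    length = len(stack[0])
    half = kernel_size // 2
    out_len = -(-length // stride)  # ceiling division
    outputs = []
    for _ in range(n_out):
        weights = [[rng.uniform(-1.0, 1.0) for _ in range(kernel_size)]
                   for _ in stack]
        channel = []
        for o in range(out_len):
            acc = 0.0
            for w, signal in zip(weights, stack):
                for k in range(kernel_size):
                    j = o * stride - half + k
                    if 0 <= j < length:
                        acc += w[k] * signal[j]
            channel.append(acc)
        outputs.append(channel)
    return outputs


def skip_and_downsample(dfs_stack: List[Signal], n_seg_channels: int,
                        n_downsample_channels: int, kernel_size: int = 3,
                        seed: int = 0) -> Tuple[List[Signal], List[Signal]]:
    rng = random.Random(seed)
    # Skip layer: [ DFS + Conv ], same resolution as the stack.
    skip = conv1d(dfs_stack, n_seg_channels, kernel_size, stride=1, rng=rng)
    # Downsampled output: [ DFS + Down ], a strided convolution.
    down = conv1d(dfs_stack, n_downsample_channels, kernel_size, stride=2,
                  rng=rng)
    return skip, down


if __name__ == "__main__":
    stack = [[float(i) for i in range(8)] for _ in range(3)]
    skip, down = skip_and_downsample(stack, n_seg_channels=2,
                                     n_downsample_channels=4)
    print(len(skip), len(skip[0]), len(down), len(down[0]))
```

In this sketch `dfs_stack` plays the role of a DenseFeatureStackBlock output; the downsampled channels would feed the next level, while the skip channels would be upscaled and merged at the end of the network.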

class AffineAugmentationLayer(scale, interpolation, boundary, transform_func=None, name='AffineAugmentation')

Bases: niftynet.layer.base_layer.TrainableLayer

This layer applies a small random (per-iteration) affine transformation to an image. The distribution of transformations generally results in scaling the image up, with minimal sampling outside the original image.

__init__(scale, interpolation, boundary, transform_func=None, name='AffineAugmentation')

- scale denotes how extreme the perturbation is, with 1.0 meaning no perturbation and 0.5 giving larger perturbations.
- interpolation denotes the image value interpolation used by the resampling.
- boundary denotes the boundary handling used by the resampling.
- transform_func should be a function returning a relative transformation, mapping <-1..1, -1..1, -1..1> to <-1..1, -1..1, -1..1> (3D) or <-1..1, -1..1> to <-1..1, -1..1> (2D).
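One plausible way to build a perturbation with the behaviour described for `scale` is sketched below; it is an assumption, not confirmed to be NiftyNet's procedure. Each coordinate of each corner of the normalized <-1..1> box is pulled towards the centre by an independent factor drawn from [scale, 1], and an affine map [A | t] is least-squares fitted from the original corners to the shrunken ones. The function names `random_affine` and `apply_affine` are hypothetical.

```python
import itertools
import random
from typing import List, Optional


def random_affine(scale: float, spatial_dims: int = 3,
                  rng: Optional[random.Random] = None) -> List[List[float]]:
    """Return a spatial_dims x (spatial_dims + 1) matrix [A | t] acting on
    normalized <-1..1> coordinates (hypothetical sketch)."""
    rng = rng or random.Random()
    corners = list(itertools.product([-1.0, 1.0], repeat=spatial_dims))
    # Pull every corner coordinate towards the centre by a factor in
    # [scale, 1]; scale == 1.0 leaves the corners where they are.
    targets = [[c * rng.uniform(scale, 1.0) for c in corner]
               for corner in corners]
    n = len(corners)
    matrix = []
    for k in range(spatial_dims):  # one row per output coordinate
        # Over the box corners, each coordinate column and the constant
        # column are mutually orthogonal with squared norm n, so the
        # least-squares coefficients reduce to plain averages.
        row = [sum(t[k] * c[j] for t, c in zip(targets, corners)) / n
               for j in range(spatial_dims)]
        row.append(sum(t[k] for t in targets) / n)  # translation term
        matrix.append(row)
    return matrix


def apply_affine(matrix: List[List[float]], point: List[float]) -> List[float]:
    """Map a point in <-1..1> coordinates through [A | t]."""
    return [sum(a * p for a, p in zip(row[:-1], point)) + row[-1]
            for row in matrix]


if __name__ == "__main__":
    m = random_affine(0.8, spatial_dims=2, rng=random.Random(0))
    print(apply_affine(m, [1.0, 1.0]))  # a corner mapped inside the box
```

Read as a map from output positions to sampling positions, the fitted transform samples mostly inside the image, so the resampled image appears scaled up; smaller `scale` values allow a stronger and more anisotropic zoom.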

spatial_dims

class Affine2DAugmentationLayer(scale, interpolation, boundary, transform_func=None, name='AffineAugmentation')

Bases: niftynet.network.dense_vnet.AffineAugmentationLayer

Specialization of AffineAugmentationLayer for 2D coordinates.

spatial_dims = 2

class Affine3DAugmentationLayer(scale, interpolation, boundary, transform_func=None, name='AffineAugmentation')

Bases: niftynet.network.dense_vnet.AffineAugmentationLayer

Specialization of AffineAugmentationLayer for 3D coordinates.

spatial_dims = 3
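The 2D and 3D layers differ from AffineAugmentationLayer only in the class attribute `spatial_dims`. The sketch below mirrors that specialization pattern with hypothetical stand-in classes (not NiftyNet's): shared, dimension-agnostic logic in the base class reads `spatial_dims` to derive dimension-dependent quantities, here the corners of the normalized box.

```python
import itertools
from typing import List, Optional, Tuple


class AffineAugmentationSketch:
    """Hypothetical stand-in for the base class: dimension-agnostic logic."""

    spatial_dims: Optional[int] = None  # set by the 2D/3D specializations

    def box_corners(self) -> List[Tuple[float, ...]]:
        """Corners of the normalized <-1..1> box: 2 ** spatial_dims of them."""
        if self.spatial_dims is None:
            raise NotImplementedError("use a 2D or 3D specialization")
        return list(itertools.product([-1.0, 1.0], repeat=self.spatial_dims))


class Affine2DSketch(AffineAugmentationSketch):
    spatial_dims = 2


class Affine3DSketch(AffineAugmentationSketch):
    spatial_dims = 3
```

Only the attribute changes between the subclasses, so every method of the base class works unchanged for both 2D and 3D inputs.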
